We’ve been releasing a series of articles taking you through the end-point assessment methods used in new apprenticeship assessment plans, and some of the practical issues to consider. (If you’ve missed these posts, here are insights into the professional discussion, presentation / showcase, practical assessments, multiple choice tests and the interview). First though, here’s a look at how “high-stakes” end-point assessment is starting to shape up…

Apprenticeship end-point assessment is happening. Every apprentice aiming for one of the new apprenticeship standards will face a formal end-point assessment at the end of their programme.

The differences between the continuous assessment of a framework-based apprenticeship and the end-point assessment for a standard are clear and are starting to be understood. The rigour of the end-point assessment is starting to be valued by employers, even though many current apprenticeship providers are not yet convinced.

At the same time, the role of the apprenticeship trainer-assessor is changing radically. In the old world, the focus was on training, the assembly of the portfolio and its continuous assessment. In the new, any on-programme assessment will focus on the progress the apprentice is making, on their success in passing through the new Gateway, and ultimately on their preparation for end-point assessment.

(The Gateway is the set of requirements laid down in each apprenticeship standard that have to be met before an apprentice can attempt end-point assessment.)

End-point assessment is “high stakes assessment”.

End-point assessment is tough, it’s different and it’s high-stakes.

What I mean is that, unlike framework-based apprenticeships, where there’s plenty of time to hone the portfolio, success or failure at the end-point can have a fundamental bearing on the start of a person’s career or on their progression.

Also, the fact that the stakes are high could lead to misdirected effort to “train for the test” and hot-house apprentices as they approach the end of their programme.

And the apprentice will be assessed by a stranger (the independent end-point assessor) rather than by the trainer-assessor with whom they have built a relationship over what could be a programme of three years or more.

On the plus side we are seeing in the early tranches of end-point assessment, in industries such as digital and energy, that employers are truly valuing the rigour of the process and are feeling in many cases that apprentices are much better prepared and job ready. As more end-point assessments are done, it is hoped that this “parity of rigour” as opposed to “parity of esteem” will place apprenticeships at least alongside academic routes for employers, labour market entrants, and their parents.

Employers as assessment designers

Trailblazer employer groups have been writing assessment plans over the last five years. The guidance they have been following and their understanding of what constitutes rigorous, valid and robust assessment are still evolving. There are many options and combinations of assessment methods that may be chosen for each assessment plan. How these methods are chosen and how they are put together to infer competence is down to the assessment plan designers, in other words to the employers.

Employers understand what it takes to do a job well. They are not assessment design specialists and so assessment plans developed over the last five years are variable in design, quality, usability, and affordability. Many plans are very good; they are not all great; but they do have one thing in common: both the employers and the government have signed them off. So, it’s now the job of the new end-point assessment organisations (EPAOs) and the end-point assessors they employ, to deliver rigorous, valid, robust and fair assessments for every apprentice, consistently.

Assessment plan design – the rules

Let’s take a closer look at the methods that are being used in assessment plan design. Here’s what the Institute for Apprenticeships specifies in their “Guidance for groups of employers (trailblazers) on proposing and developing a new apprenticeship standard” (www.instituteforapprenticeships.org/developing-apprenticeships/how-to-develop-an-apprenticeship-standard-guide-for-trailblazers/)

“3.3 Your EPA plan must lead to an assessment which:

- ….Uses a range of appropriate assessment methods, so apprentices can demonstrate their occupational competence across the KSBs….”

“3.7 The EPA you specify in the plan must include at least 2 distinct methods of assessment. These can include:

- practical assessments conducted in a workplace or simulated environment (the assessor will observe how the apprentice undertakes one or more tasks)

- a viva or professional discussion to assess theoretical or technical knowledge or to discuss how the apprentice approached the practical assessment and their reasoning

- a project report created after the gateway

- written and multiple choice tests

- virtual assessments, such as online tests or video evidence as appropriate to the content

3.8 You should think carefully about the fitness of different assessment methods to the occupational area and the specific KSBs to be assessed. You may want to combine assessment methods to improve their effectiveness – for example a practical test followed by a professional discussion will allow the candidate to demonstrate a range of knowledge, skills and behaviours….”

(As an aside, portfolios have never really been viewed as an assessment method. Some employer groups wanted to include an assessment of progress in skills development and so some limited portfolio concession appeared. More recently the policy to exclude portfolios has been reaffirmed and some standards now exclude portfolios compiled for training and only use those that have been compiled in the final weeks of the apprenticeship specifically for use in the end-point assessment. In the future it is probable that the use of portfolios for assessment will reduce although many assessment plans still include them.)

Under the bonnet of assessment plans

During our work with the employer groups, apprenticeship providers, and the new EPAOs, we’ve had to get under the bonnet of many assessment plans, looking at how they can be put into practice and how assessors can be prepared to deliver consistency.

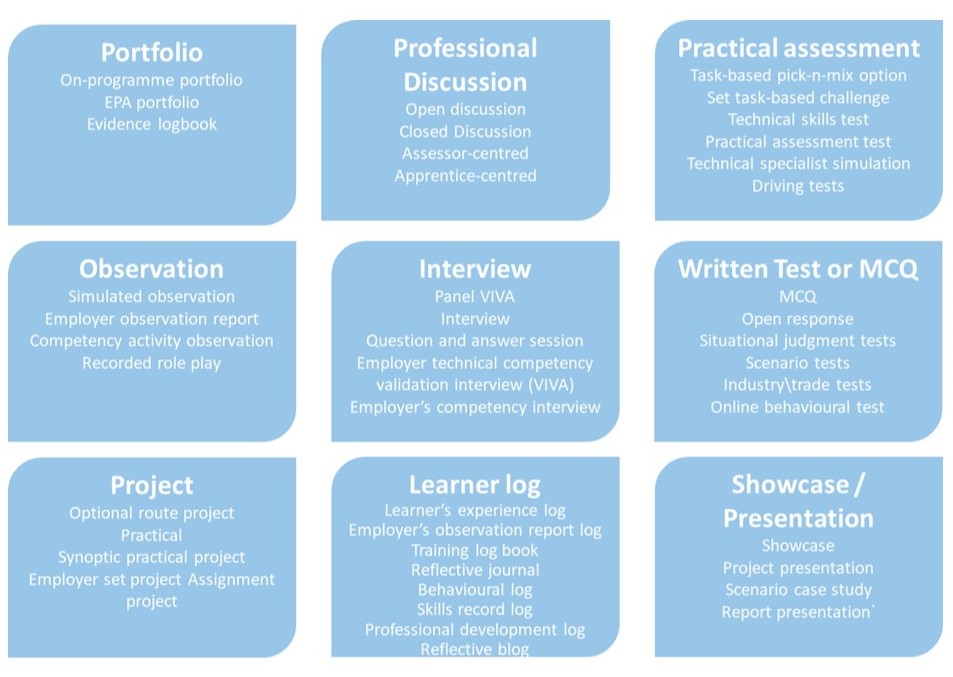

We’ve unpacked that and have come up with a more detailed breakdown of the possibilities for assessment methods.

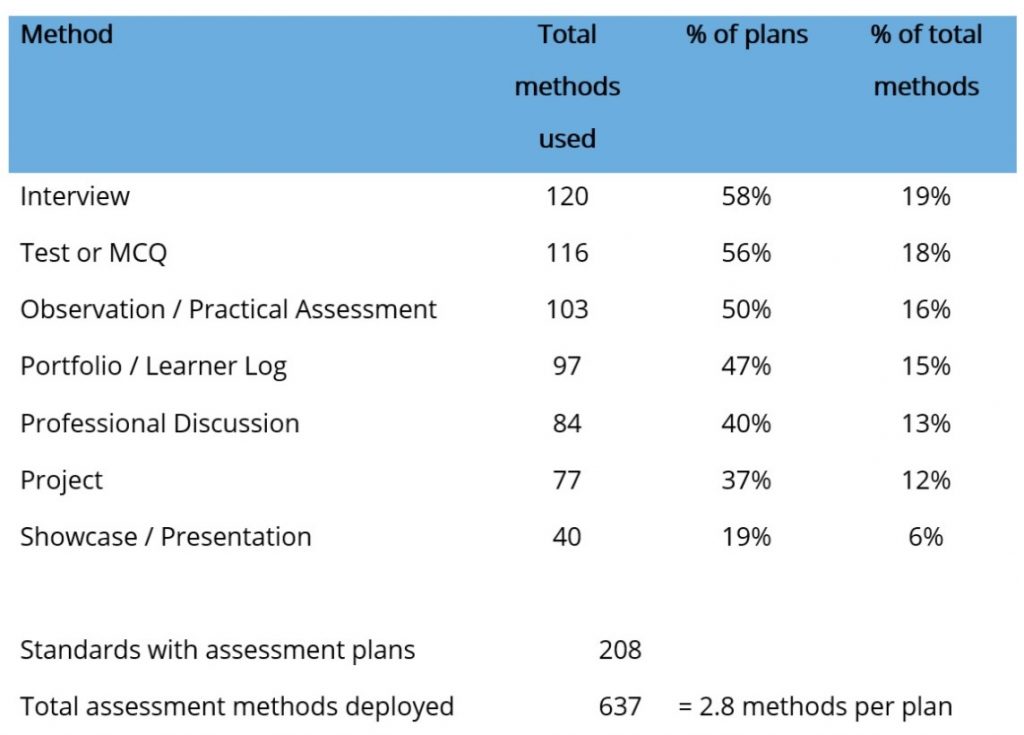

We’ve also conducted an analysis of the methods used in assessment plans. In the 208 published assessment plans a total of 637 assessment methods have been deployed. That’s just over three per plan. The breakdown looks like this:

In this new series of blogs, we will take you through each assessment method in turn, looking at some of the standards they are used in and giving you an overview of how each assessment method works, how reliable, valid and robust it can or should be, and some of the practical issues you may encounter as you use it.

Let us know if you have any comments. We’re very keen to hear from assessors who are using these methods in their end-point assessments every day. We would love to feature your experiences of how end-point assessment is working in practice in future blogs.

We’re producing a set of recorded presentations covering the main end-point assessment methods and critical areas of practice. They will be available at the beginning of March. Places are now also available on the Level 3 Award in Undertaking End-Point Assessment. You can find out more at www.strategicdevelopmentnetwork.co.uk/sdnevent

(More updates and practice can be accessed on our new End-Point Assessment LinkedIn Group and through our mailing list – feel free to join!)